Chapter 3 Test Fairness

3.1 Introduction

The 2014 Standards for Educational and Psychological Testing (AERA, APA, & NCME, 2014, p. 49) indicate that fairness to all individuals in the intended population is an overriding and fundamental validity concern. “The central idea of fairness in testing is to identify and remove construct-irrelevant barriers to maximal performance for any examinee” (Standards, 2014, p. 63).

The term fairness has no single technical meaning and is used in many ways in public discourse. Issues that influence test fairness include, but are not limited to:

- the definition of the construct (the knowledge, skills, and abilities) that is intended to be measured;

- the development of items and tasks that are explicitly designed to assess the construct that is the target of measurement;

- the delivery of items and tasks in a manner that enables students to demonstrate their achievement of the construct; and

- how student responses to items and tasks are captured and scored.

Although the content in this chapter addresses these issues, the reader may be directed to other, or additional, resources that provide evidence for test fairness. Most elements in the Smarter Balanced approach to test fairness apply to the entire assessment system, including the summative and interim assessments.

The interim assessments use the same content specifications as the summative assessments. The knowledge, skills, and abilities assessed by Smarter Balanced tests and their relationship to the CCSS are described in the Content Specifications for the summative assessment (2017a,b). These documents describe the major constructs—identified as “claims”—within ELA/literacy and mathematics for which evidence of student achievement is gathered and are the basis for reporting student performance.

Students have access to the same accessibility resources on the interim assessments that are available on the summative assessments. These resources include universal tools, designated supports, and accommodations. These resources are described in the Guidelines (Smarter Balanced, 2018b), in this chapter, and in a similar chapter in the summative technical report (Smarter Balanced, 2019a). It is important to note that the inferences that may be made from test results should in no way be influenced by the use or nonuse of accessibility resources. In contrast, the term “modifications” typically refers to differences from standard conditions that may affect the meaning of test scores and should therefore be reported along with test results.

3.1.1 Attention to Bias and Sensitivity in Test Development

According to the Standards, bias is “construct underrepresentation or construct-irrelevant components of test scores that differentially affect the performance of different groups of test takers and consequently the reliability/precision and validity of interpretations and uses of their test scores” (AERA, APA, & NCME, 2014, p. 216). “Sensitivity” refers to an awareness of the need to avoid explicit bias in assessment. In common usage, reviews of tests for bias and sensitivity help ensure that test items and stimuli are fair for various groups of test takers (AERA, APA, & NCME, 2014, p. 64).

The goal of fairness in assessment is to assure that test materials are as free as possible from unnecessary barriers to the success of diverse groups of students. Smarter Balanced developed the Bias and Sensitivity Guidelines (ETS, 2012) to help ensure that the assessments are fair for all groups of test takers, despite differences in characteristics including, but not limited to, disability status, ethnic group, gender, regional background, native language, race, religion, sexual orientation, and socioeconomic status. Unnecessary barriers can be reduced by following some fundamental rules:

- measuring only knowledge or skills that are relevant to the intended construct;

- not angering, offending, upsetting, or otherwise distracting test takers; and

- treating all groups of people with appropriate respect in test materials.

These rules help ensure that the test content is fair for test takers and acceptable to the many stakeholders and constituent groups within Smarter Balanced member organizations. Fairness must be considered in all phases of test development and use. Smarter Balanced strongly relied on the Bias and Sensitivity Guidelines in the development and design phases of the Smarter Balanced assessments, including the training of item writers, item writing, and review. Smarter Balanced’s focus and attention on bias and sensitivity comply with Chapter 3 of the Standards, which states: “Test developers are responsible for developing tests that measure the intended construct and for minimizing the potential for tests being affected by construct-irrelevant characteristics such as linguistic, communicative, cognitive, cultural, physical, or other characteristics” (AERA, APA, & NCME, 2014, p. 64).

3.2 The Smarter Balanced Accessibility and Accommodations Framework

Smarter Balanced has built a framework of accessibility for all students, including English Learners (ELs), students with disabilities, and ELs with disabilities, but not limited to those groups. Three resources—the Smarter Balanced Item and Task Specifications Bibliography (2016f), the Smarter Balanced Accessibility and Accommodations Framework (2016e), and the Smarter Balanced Bias and Sensitivity Guidelines (ETS, 2012)—are used to guide the development of the assessments, items, and tasks to ensure that they accurately measure the targeted constructs. Recognizing the diverse characteristics and needs of students who participate in the Smarter Balanced assessments, the states worked together through the Smarter Balanced test administration and student access workgroup to develop the Accessibility and Accommodations Framework (Smarter Balanced, 2016e) that guided the Consortium as it worked to reach agreement on the specific universal tools, designated supports, and accommodations available for the assessments. This work also incorporated research and practical lessons learned through universal design, accessibility tools, and accommodations (Thompson, Johnstone, & Thurlow, 2002).

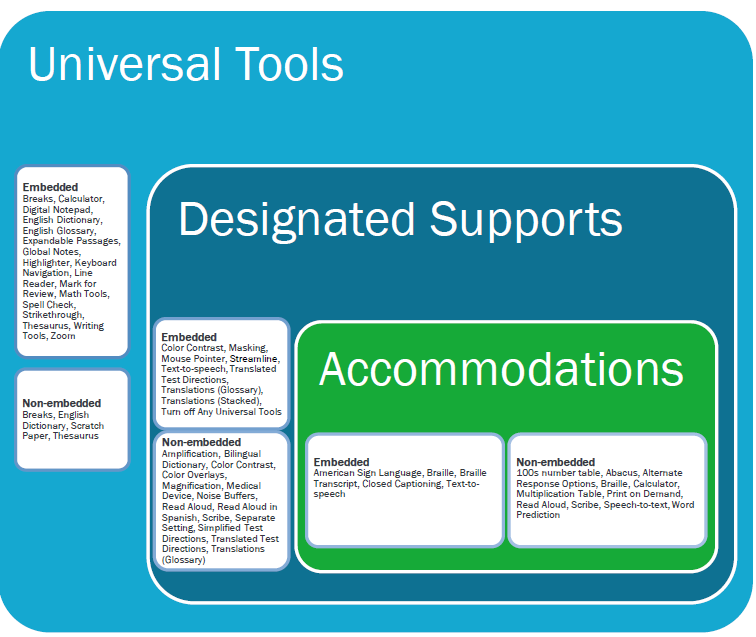

In the process of developing its next-generation assessments to measure students’ knowledge and skills as they progress toward college and career readiness, Smarter Balanced recognized that the validity of assessment results depends on each student having appropriate universal tools, designated supports, and accommodations when needed, based on the constructs being measured by the assessment. The Smarter Balanced assessment system uses technology intended to deliver assessments that meet the needs of individual students to help ensure that the test is fair. Online/electronic delivery of the assessments helps ensure that students are administered a test that can meet their unique needs for accessibility while still measuring the same construct. During the administration of tests, items and tasks are delivered using a variety of accessibility resources and accommodations that can be administered to students automatically based on their individual profiles. Accessibility resources include, but are not limited to, foreground and background color flexibility, tactile presentation of content (e.g., braille), and translated presentation of assessment content in signed form and selected spoken languages. The complete list of universal tools, designated supports, and accommodations with a description of each and recommendations for use can be found in the Usability, Accessibility, and Accommodations Guidelines (Smarter Balanced, 2018b). The conceptual model underlying the guidelines is shown in Figure 3.1.

Figure 3.1: Conceptual Model Underlying the Smarter Balanced Usability, Accessibility, and Accommodations Guidelines

Smarter Balanced adopted a common set of universal tools, designated supports, and accommodations. As a starting point, Smarter Balanced surveyed all members to determine their past practices. From these data, Smarter Balanced worked with members and used a deliberative analysis strategy as described in Accommodations for English Language Learners and Students with Disabilities: A Research-Based Decision Algorithm (Abedi & Ewers, 2013) to determine which accessibility resources should be made available during the assessment and whether access to these resources should be moderated by an adult. As a result, some accessibility resources that states traditionally had identified as accommodations were instead embedded in the test or otherwise incorporated into the Smarter Balanced assessments as universal tools or designated supports. Other resources were not incorporated into the assessment because access to these resources was not grounded in research or was determined to interfere with the construct measured. The final list of accessibility resources and the recommended use of the resources can be found in the Usability, Accessibility, and Accommodations Guidelines (2018b).

A fundamental goal was to design an assessment that is accessible for all students, regardless of English language proficiency, disability, or other individual circumstances. The three components (universal tools, designated supports, and accommodations) of the Accessibility and Accommodations Framework are designed to meet that need. The intent was to:

- Design and develop items and tasks to ensure that all students have access to the items and tasks designed to measure the targeted constructs. In addition, deliver items, tasks, and the collection of student responses in a way that maximizes validity for each student.

- Adopt the conceptual model embodied in the Accessibility and Accommodations Framework that describes accessibility resources of digitally delivered items/tasks and acknowledges the need for some adult-monitored accommodations. The model also characterizes accessibility resources as a continuum, ranging from those available to all students to those that are implemented under adult supervision and available only to students with a documented need.

- Implement the use of an individualized and systematic needs profile for students, or Individual Student Assessment Accessibility Profile (ISAAP), that promotes the provision of appropriate access and tools for each student. Smarter Balanced created an ISAAP process that helps education teams systematically select the most appropriate accessibility resources for each student and the ISAAP tool, which helps teams note the accessibility resources chosen.

The conceptual framework that serves as the basis underlying the Usability, Accessibility, and Accommodations Guidelines is shown in Figure 3.1. This figure portrays several aspects of the Smarter Balanced assessment resources—universal tools (available for all students), designated supports (available when indicated by an adult or team), and accommodations (as documented in an Individualized Education Program or 504 plan). It also displays the additive and sequentially inclusive nature of these three aspects. Universal tools are available to all students, including those receiving designated supports and those receiving accommodations. Designated supports are available only to students who have been identified as needing these resources (as well as those students for whom the need is documented). Accommodations are available only to those students with documentation of the need through a formal plan (e.g., IEP, 504). Those students also may access designated supports and universal tools.
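The additive, sequentially inclusive structure described above can be illustrated with a minimal sketch. The names `Tier` and `available_tiers` and the two boolean flags are hypothetical and introduced only for illustration; they are not part of any Smarter Balanced system.

```python
from enum import IntEnum
from typing import List


class Tier(IntEnum):
    """Hypothetical labels for the three categories of accessibility resources."""
    UNIVERSAL_TOOLS = 1
    DESIGNATED_SUPPORTS = 2
    ACCOMMODATIONS = 3


def available_tiers(adult_indicated: bool, documented_plan: bool) -> List[Tier]:
    """Return the resource categories a student may access.

    adult_indicated: an adult or team has indicated a need (assumed flag).
    documented_plan: the need is documented in an IEP or 504 plan (assumed flag).
    """
    # Universal tools are available to every student.
    tiers = [Tier.UNIVERSAL_TOOLS]
    # Designated supports require an identified need; students with a
    # documented plan may access them as well.
    if adult_indicated or documented_plan:
        tiers.append(Tier.DESIGNATED_SUPPORTS)
    # Accommodations require documentation through a formal plan.
    if documented_plan:
        tiers.append(Tier.ACCOMMODATIONS)
    return tiers
```

In this sketch, each higher tier includes the lower ones, so a student with a documented plan receives all three categories.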

A universal tool or a designated support may also be an accommodation, depending on the content target and grade. This approach is consistent with the emphasis that Smarter Balanced has placed on the validity of assessment results coupled with access. Universal tools, designated supports, and accommodations are all intended to yield valid scores. Also shown in Figure 3.1 are the universal tools, designated supports, and accommodations for each category of accessibility resources. Accessibility resources may be embedded or non-embedded; the distinction is based on how the resource is provided (either within digitally delivered components of the test or outside of the test delivery system).

The specific universal tools, designated supports, and accommodations approved by Smarter Balanced may change in the future if additional tools, supports, or accommodations are identified for the assessment based on member experience and research findings. The Consortium has established a standing committee, including representatives from governing members, that reviews suggested additional universal tools, designated supports, and accommodations to determine if changes are warranted. Proposed changes to the list of universal tools, designated supports, and accommodations are brought to governing members for review, input, and vote for approval.

3.3 Meeting the Needs of Traditionally Underrepresented Populations

A policy decision was made during the development of Smarter Balanced assessments to make accessibility resources available to all students based on need rather than eligibility status or demographic group categorical designation. This decision reflects a belief among Consortium states that unnecessarily restricting access to accessibility resources threatens the validity of the assessment results and places students under undue stress and frustration. Additionally, accommodations are available for students who qualify for them. The Consortium utilizes a needs-based approach to providing accessibility resources. A description as to how this benefits ELs, students with disabilities, and ELs with disabilities is presented here.

3.3.1 Students Who Are ELs

Students who are ELs have needs that are different from those of students with disabilities, including language-related disabilities. The needs of ELs are not the result of a language-related disability, but instead are specific to the student’s current level of English language proficiency. The needs of ELs are diverse and are influenced by the interaction of several factors, including their current level of English language proficiency, their prior exposure to academic content and language in their native language, the languages to which they are exposed outside of school, the length of time they have participated in the U.S. education system, and the language(s) in which academic content is presented in the classroom. Given the unique background and needs of each student, the conceptual framework is designed to focus on students as individuals and to provide several accessibility resources that can be combined in a variety of ways. Some of these digital tools, such as using a highlighter to highlight key information and an audio presentation of test navigation features, are available to all students, including those at various stages of English language development. Other tools, such as the audio presentation of items and glossary definitions in English, may also be assigned to any student, including those at various stages of English language development. Still other tools, such as embedded glossaries that present translations of construct-irrelevant terms, are intended for those students whose prior language experiences would allow them to benefit from translations into another language. Collectively, the conceptual framework for usability, accessibility, and accommodations embraces a variety of accessibility resources that have been designed to meet the needs of students at various stages in their English language development.

3.3.2 Students with Disabilities

Federal law (Individuals with Disabilities Education Act, 2004) requires that students with disabilities and a documented need receive accommodations that address those needs and that they participate in assessments. The intent of the law is to ensure that all students have appropriate access to instructional materials and are held to the same high standards. When students are assessed, the law ensures that students receive appropriate accommodations during testing so they can appropriately demonstrate what they know and so their achievement is measured accurately.

The Accessibility and Accommodations Framework (Smarter Balanced, 2016e) addresses the needs of students with disabilities in three ways. First, it provides for the use of digital test items that are purposefully designed to contain multiple forms of the item, each developed to address a specific access need. By allowing the delivery of a given item to be tailored based on each student’s accommodation, the Framework fulfills the intent of federal accommodation legislation. Embedding universal accessibility digital tools, however, addresses only a portion of the access needs required by many students with disabilities. Second, by embedding accessibility resources in the digital test delivery system, additional access needs are met. This approach fulfills the intent of the law for many, but not all, students with disabilities by allowing the accessibility resources to be activated for students based on their needs. Third, by allowing for a wide variety of digital and locally provided accommodations (including physical arrangements), the Framework addresses a spectrum of accessibility resources appropriate for mathematics and ELA/literacy assessment. Collectively, the Framework adheres to federal regulations by allowing a combination of universal design principles, universal tools, designated supports, and accommodations to be embedded in a digital delivery system and assigned and provided based on individual student needs. Therefore, a student with a disability benefits from the system because they may be eligible to access resources from any of the three categories as necessary to create an assessment tailored to their individual need.

3.4 The Individual Student Assessment Accessibility Profile (ISAAP)

Typical practice frequently required schools and educators to document, a priori, the need for specific student accommodations and then to document the use of those accommodations after the assessment. For example, most programs required schools to document a student’s need for a large-print version of a test for delivery to the school. Following the test administration, the school documented (often by bubbling in information on an answer sheet) which of the accommodations, if any, a given student received, whether the student actually used the large-print form, and whether any other accommodations were provided.

Universal tools are available by default in the Smarter Balanced test delivery system. These tools can be deactivated if they create an unnecessary distraction for the student. Embedded designated supports and accommodations must be enabled by an informed educator or group of educators with the student when appropriate. To facilitate the decision-making process around individual student accessibility needs, Smarter Balanced has established an Individual Student Assessment Accessibility Profile (ISAAP) tool. The ISAAP tool is designed to facilitate selection of the universal tools, designated supports, and accommodations that match student access needs for the Smarter Balanced assessments, as supported by the Smarter Balanced Usability, Accessibility, and Accommodations Guidelines (Smarter Balanced, 2018b). Smarter Balanced recommends that the ISAAP tool be used in conjunction with the Smarter Balanced Usability, Accessibility, and Accommodations Guidelines and state regulations and policies related to assessment accessibility as a part of the ISAAP process. For students requiring one or more accessibility resources, schools are able to document this need prior to test administration. Furthermore, the ISAAP can include information about universal tools that may need to be eliminated for a given student. By documenting needs prior to test administration, a digital delivery system is able to activate the specified options when the student logs in to an assessment. In this way, the profile permits school-level personnel to focus on each individual student, documenting the accessibility resources required for valid assessment of that student in a way that is efficient to manage.
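As a rough illustration of how a pre-documented profile could drive what is active at login, consider the sketch below. It is not the ISAAP tool or the Smarter Balanced delivery system; the class `AccessibilityProfile`, the function `resources_at_login`, and every resource name in it are hypothetical placeholders.

```python
from dataclasses import dataclass, field
from typing import Set

# Illustrative placeholder names only, not the official list of universal tools.
DEFAULT_UNIVERSAL_TOOLS = frozenset({"highlighter", "zoom"})


@dataclass
class AccessibilityProfile:
    """Hypothetical record of decisions documented before test administration."""
    student_id: str
    disabled_universal_tools: Set[str] = field(default_factory=set)
    designated_supports: Set[str] = field(default_factory=set)
    accommodations: Set[str] = field(default_factory=set)


def resources_at_login(profile: AccessibilityProfile) -> Set[str]:
    """Return the resources to activate when the student logs in."""
    # Universal tools are on by default unless the profile turns them off.
    universal = DEFAULT_UNIVERSAL_TOOLS - profile.disabled_universal_tools
    # Designated supports and accommodations are active only if documented.
    return set(universal) | profile.designated_supports | profile.accommodations


profile = AccessibilityProfile(
    student_id="S-001",
    disabled_universal_tools={"highlighter"},   # found distracting for this student
    designated_supports={"translated_glossary"},
    accommodations={"braille"},
)
active = resources_at_login(profile)
```

The design choice worth noting is that the profile stores only deviations from the default (tools to remove, resources to add), which mirrors the description above of universal tools being available by default.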

The conceptual framework shown in Figure 3.1 provides a structure that assists in identifying which accessibility resources should be made available for each student. In addition, the conceptual framework is designed to differentiate between universal tools available to all students and accessibility resources that must be assigned before the administration of the assessment. Consistent with recommendations from Shafer and Rivera (2011); Thurlow, Quenemoen, and Lazarus (2011); Fedorchak (2012); and Russell (2011), Smarter Balanced is encouraging school-level personnel to use a team approach to make decisions concerning each student’s ISAAP. Input from individuals with multiple perspectives, including the student, will likely result in appropriate decisions about the assignment of accessibility resources. Multiple perspectives will also avoid selecting too many accessibility resources for a student. The use of too many unneeded accessibility resources can be distracting to the student and decrease the student’s performance.

3.5 Usability, Accessibility, and Accommodations Guidelines

Smarter Balanced (2018b) developed the Usability, Accessibility, and Accommodations Guidelines (UAAG) for its members to guide the selection and administration of universal tools, designated supports, and accommodations. All ICAs and IABs are fully accessible and offer all accessibility resources as appropriate by grade and content area, including ASL, braille, and Spanish. The UAAG is intended for school-level personnel and decision-making teams, particularly Individualized Education Program (IEP) teams, as they prepare for and implement the Smarter Balanced summative and interim assessments. The UAAG provides information for classroom teachers, English development educators, special education teachers, and related services personnel to select and administer universal tools, designated supports, and accommodations for those students who need them. The UAAG is also intended for assessment staff and administrators who oversee the decisions that are made in instruction and assessment. It emphasizes an individualized approach to the implementation of assessment practices for those students who have diverse needs and participate in large-scale assessments. The document focuses on universal tools, designated supports, and accommodations for the Smarter Balanced summative and interim assessments in ELA/literacy and mathematics. At the same time, it supports important instructional decisions about accessibility for students. It recognizes the critical connection between accessibility in instruction and accessibility during assessment. The UAAG is also incorporated into the Smarter Balanced Online Test Administration Manual (Smarter Balanced, 2018c).

3.6 Differential Item Functioning (DIF)

DIF analyses are used to identify items that have different measurement properties for students in different demographic groups who are matched on overall achievement. Information about DIF and the procedures for reviewing items flagged for DIF is a component of validity evidence associated with the internal properties of the test.

3.6.1 Method of Assessing DIF

DIF analyses are performed on items using data gathered in field testing. In a DIF analysis, the performance on an item of two groups that are similar in achievement but differ demographically is compared. In general, the two groups are called the focal and reference groups. The focal group is usually a minority group (e.g., Hispanics), while the reference group is usually a contrasting majority group (e.g., Caucasian) or all students who are not part of the focal group demographic. Table 3.1 shows the focal and reference groups for the DIF analyses performed by Smarter Balanced.

| Group Type | Focal Group | Reference Group |
|---|---|---|
| Gender | Female | Male |
| Ethnicity | African American | White |
| | Asian/Pacific Islander | White |
| | Native American/Alaska Native | White |
| | Hispanic | White |
| Special Populations | Limited English Proficient (LEP) | English Proficient |
| | Individualized Education Program (IEP) | No IEP |
| | Title 1 (Economically disadvantaged) | Not Title 1 |

Items are classified into three DIF categories of “A” (negligible), “B” (slight or moderate), or “C” (moderate to large), according to the observed degree of DIF for a particular focal-reference comparison. Each letter also carries a sign: a positive sign means that the focal group performs better on the item than the reference group, and a negative sign means that the reference group performs better on the item than the focal group. For details on the method of computing DIF statistics and criteria for flagging items by their degree of DIF, please see the current Smarter Balanced summative technical report (Smarter Balanced, 2019). DIF analyses may not be carried out, or may not be capable of detecting DIF, if the sample size for either the reference group or the focal group is too small.
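This chapter does not specify the DIF statistic or the flagging criteria, so the following is only a hedged sketch of one widely used approach for dichotomous items: the Mantel-Haenszel (MH) common odds ratio, expressed on the delta scale as MH D-DIF = -2.35 ln(alpha_MH). The thresholds of 1.0 and 1.5 follow the commonly cited ETS convention and are an assumption here. The sketch also omits the statistical significance tests that operational rules normally include, and a sign is attached to A items only when the letter is B or C. The function names are hypothetical.

```python
import math
from typing import Iterable, Tuple

# One score stratum of students matched on overall achievement:
# (reference correct, reference incorrect, focal correct, focal incorrect)
Stratum = Tuple[int, int, int, int]


def mh_d_dif(strata: Iterable[Stratum]) -> float:
    """Mantel-Haenszel D-DIF; positive values favor the focal group."""
    num = 0.0  # sum over strata of ref_correct * focal_incorrect / n
    den = 0.0  # sum over strata of ref_incorrect * focal_correct / n
    for ref_right, ref_wrong, foc_right, foc_wrong in strata:
        n = ref_right + ref_wrong + foc_right + foc_wrong
        if n == 0:
            continue  # skip empty strata
        num += ref_right * foc_wrong / n
        den += ref_wrong * foc_right / n
    if num == 0 or den == 0:
        raise ValueError("odds ratio is undefined for these counts")
    alpha_mh = num / den  # common odds ratio, reference relative to focal
    return -2.35 * math.log(alpha_mh)


def classify(d_dif: float) -> str:
    """Simplified size-only classification (assumed thresholds, no significance test)."""
    size = abs(d_dif)
    if size < 1.0:
        return "A"
    letter = "B" if size < 1.5 else "C"
    return letter + ("+" if d_dif > 0 else "-")
```

For example, a single stratum in which 40 of 50 reference-group students and 20 of 50 focal-group students answer correctly gives an odds ratio of 6, a strongly negative MH D-DIF, and a classification of C- under these simplified rules.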

Items flagged for C-level DIF are subsequently reviewed by content experts and bias/sensitivity committees to determine the source and meaning of performance differences. An item flagged for C-level DIF may be measuring something different from the intended construct. However, it is important to recognize that DIF-flagged items might be related to actual differences in relevant knowledge and skills or may have been flagged due to chance variation in the DIF statistic (known as statistical type I error). As Cole and Zieky (2001, p. 375) noted, “If the members of the measurement community currently agree on any aspect of fairness, it is that score differences alone are not proof of bias.”

3.7 DIF Analysis Results for Interim Assessment Items

A relatively small number of items on the interim assessments showed performance differences between student groups as indicated by C-DIF flagging criteria. Table 3.2 and Table 3.3 show the number of items flagged for all categories of DIF for ELA/literacy and mathematics in grades 3 to 8 and 11 for the ICAs. Table 3.4 and Table 3.5 show the number of items flagged for all categories of DIF for ELA/literacy and mathematics in grades 3 to 8 and 11 for the IABs. Category “N/A” DIF represents items for which sample sizes did not meet the minimum requirement for estimating DIF.

All items on the interim assessments underwent and passed bias and sensitivity reviews before they were field tested. After DIF statistics were obtained for these items, qualified subject matter and bias/sensitivity experts reviewed items classified into category C of DIF. Only items approved by a multi-disciplinary panel of content and sensitivity experts are eligible to be used on Smarter Balanced assessments.
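To read the tables that follow, it can help to express C-level flags as a share of the items for which DIF could be estimated (that is, excluding category N/A). The short sketch below does this for one column of counts; the helper name `c_flag_share` is hypothetical, and the counts are copied from the grade 6 Female versus Male comparison in the first DIF results table of this section.

```python
from typing import Dict, Tuple


def c_flag_share(counts: Dict[str, int]) -> Tuple[int, int, float]:
    """Return (C-flagged items, items with estimable DIF, share flagged)."""
    # Items in category N/A had samples too small to estimate DIF.
    estimable = sum(n for cat, n in counts.items() if cat != "N/A")
    flagged = counts.get("C-", 0) + counts.get("C+", 0)
    share = flagged / estimable if estimable else float("nan")
    return flagged, estimable, share


# Grade 6, Female versus Male, first DIF results table in this section.
grade6_female_male = {"N/A": 2, "A": 44, "B-": 0, "B+": 2, "C-": 0, "C+": 1}
flagged, estimable, share = c_flag_share(grade6_female_male)
```

Here one of the 47 items with estimable DIF is flagged at the C level.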

| Grade | DIF Category | Female/Male | Asian/White | Black/White | Hispanic/White | Native American/White | IEP/Non-IEP | LEP/Non-LEP | Title 1/Non-Title 1 |
|---|---|---|---|---|---|---|---|---|---|
| 3 | N/A | 2 | 5 | 3 | 2 | 17 | 2 | 2 | 2 |
| | A | 44 | 39 | 44 | 45 | 30 | 45 | 45 | 46 |
| | B- | 0 | 1 | 1 | 1 | 1 | 0 | 1 | 0 |
| | B+ | 2 | 3 | 0 | 0 | 0 | 1 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 4 | N/A | 2 | 2 | 2 | 2 | 15 | 2 | 2 | 2 |
| | A | 46 | 46 | 47 | 47 | 32 | 46 | 47 | 47 |
| | B- | 0 | 1 | 0 | 0 | 2 | 1 | 0 | 0 |
| | B+ | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 5 | N/A | 2 | 3 | 2 | 2 | 20 | 2 | 2 | 2 |
| | A | 45 | 43 | 44 | 43 | 25 | 46 | 45 | 46 |
| | B- | 0 | 2 | 2 | 2 | 2 | 0 | 1 | 0 |
| | B+ | 1 | 0 | 0 | 1 | 1 | 0 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 6 | N/A | 2 | 5 | 3 | 2 | 18 | 2 | 4 | 2 |
| | A | 44 | 43 | 46 | 47 | 30 | 46 | 45 | 47 |
| | B- | 0 | 0 | 0 | 0 | 1 | 1 | 0 | 0 |
| | B+ | 2 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 7 | N/A | 2 | 3 | 3 | 2 | 16 | 3 | 3 | 2 |
| | A | 44 | 44 | 43 | 47 | 33 | 46 | 46 | 47 |
| | B- | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | B+ | 2 | 2 | 3 | 0 | 0 | 0 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 8 | N/A | 2 | 2 | 2 | 2 | 13 | 2 | 2 | 2 |
| | A | 45 | 45 | 47 | 47 | 35 | 48 | 47 | 48 |
| | B- | 0 | 1 | 0 | 1 | 1 | 0 | 0 | 0 |
| | B+ | 2 | 2 | 1 | 0 | 1 | 0 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 |
| | C+ | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 11 | N/A | 2 | 2 | 2 | 2 | 34 | 2 | 3 | 2 |
| | A | 42 | 42 | 43 | 43 | 12 | 41 | 42 | 44 |
| | B- | 1 | 1 | 0 | 1 | 0 | 2 | 0 | 0 |
| | B+ | 1 | 1 | 1 | 0 | 0 | 1 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| Grade | DIF Category | Female/Male | Asian/White | Black/White | Hispanic/White | Native American/White | IEP/Non-IEP | LEP/Non-LEP | Title 1/Non-Title 1 |
|---|---|---|---|---|---|---|---|---|---|
| 3 | N/A | 0 | 0 | 0 | 0 | 30 | 0 | 0 | 0 |
| | A | 37 | 34 | 34 | 35 | 7 | 37 | 36 | 37 |
| | B- | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 |
| | B+ | 0 | 3 | 2 | 1 | 0 | 0 | 0 | 0 |
| | C- | 0 | 0 | 0 | 1 | 0 | 0 | 1 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 4 | N/A | 0 | 1 | 0 | 0 | 6 | 0 | 0 | 0 |
| | A | 35 | 33 | 35 | 36 | 28 | 36 | 36 | 36 |
| | B- | 0 | 1 | 0 | 0 | 2 | 0 | 0 | 0 |
| | B+ | 1 | 1 | 1 | 0 | 0 | 0 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 5 | N/A | 0 | 0 | 0 | 0 | 10 | 0 | 0 | 0 |
| | A | 37 | 32 | 36 | 37 | 27 | 37 | 36 | 37 |
| | B- | 0 | 1 | 0 | 0 | 0 | 0 | 1 | 0 |
| | B+ | 0 | 4 | 1 | 0 | 0 | 0 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 6 | N/A | 0 | 0 | 0 | 0 | 35 | 0 | 0 | 0 |
| | A | 36 | 36 | 36 | 36 | 1 | 36 | 36 | 36 |
| | B- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | B+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 7 | N/A | 0 | 0 | 0 | 0 | 37 | 0 | 0 | 0 |
| | A | 35 | 35 | 37 | 36 | 0 | 34 | 35 | 37 |
| | B- | 1 | 0 | 0 | 0 | 0 | 0 | 2 | 0 |
| | B+ | 1 | 2 | 0 | 1 | 0 | 3 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 8 | N/A | 0 | 2 | 0 | 0 | 36 | 0 | 0 | 0 |
| | A | 37 | 29 | 36 | 35 | 1 | 36 | 36 | 37 |
| | B- | 0 | 3 | 0 | 2 | 0 | 0 | 0 | 0 |
| | B+ | 0 | 2 | 1 | 0 | 0 | 1 | 1 | 0 |
| | C- | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 11 | N/A | 0 | 3 | 1 | 0 | 38 | 2 | 3 | 0 |
| | A | 36 | 32 | 35 | 38 | 0 | 34 | 34 | 38 |
| | B- | 1 | 1 | 1 | 0 | 0 | 0 | 1 | 0 |
| | B+ | 1 | 1 | 1 | 0 | 0 | 2 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |

| Grade | DIF Category | Female/Male | Asian/White | Black/White | Hispanic/White | Native American/White | IEP/Non-IEP | LEP/Non-LEP | Title 1/Non-Title 1 |
|---|---|---|---|---|---|---|---|---|---|
| 3 | N/A | 0 | 20 | 13 | 0 | 66 | 11 | 6 | 0 |
| | A | 115 | 93 | 103 | 114 | 50 | 103 | 109 | 119 |
| | B- | 1 | 3 | 2 | 5 | 4 | 2 | 4 | 1 |
| | B+ | 4 | 4 | 2 | 0 | 0 | 4 | 1 | 0 |
| | C- | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 4 | N/A | 0 | 17 | 5 | 0 | 58 | 5 | 2 | 0 |
| | A | 114 | 95 | 111 | 113 | 57 | 110 | 111 | 116 |
| | B- | 0 | 2 | 0 | 2 | 2 | 2 | 2 | 1 |
| | B+ | 2 | 2 | 1 | 1 | 0 | 0 | 0 | 0 |
| | C- | 0 | 0 | 0 | 1 | 0 | 0 | 2 | 0 |
| | C+ | 1 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |
| 5 | N/A | 0 | 22 | 8 | 0 | 65 | 7 | 8 | 0 |
| | A | 109 | 89 | 104 | 111 | 49 | 108 | 102 | 113 |
| | B- | 3 | 4 | 4 | 3 | 2 | 1 | 4 | 2 |
| | B+ | 4 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C- | 0 | 0 | 0 | 2 | 0 | 0 | 2 | 1 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 6 | N/A | 0 | 15 | 4 | 0 | 77 | 4 | 7 | 0 |
| | A | 108 | 92 | 105 | 109 | 38 | 106 | 100 | 112 |
| | B- | 3 | 5 | 2 | 3 | 0 | 2 | 5 | 3 |
| | B+ | 3 | 2 | 4 | 2 | 0 | 2 | 1 | 0 |
| | C- | 1 | 1 | 0 | 1 | 0 | 1 | 2 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 7 | N/A | 0 | 14 | 7 | 2 | 67 | 7 | 10 | 0 |
| | A | 112 | 99 | 108 | 112 | 52 | 108 | 105 | 118 |
| | B- | 3 | 2 | 1 | 3 | 0 | 3 | 4 | 1 |
| | B+ | 3 | 4 | 2 | 1 | 0 | 1 | 0 | 0 |
| | C- | 1 | 0 | 1 | 1 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 8 | N/A | 0 | 9 | 4 | 0 | 34 | 5 | 8 | 0 |
| | A | 85 | 77 | 85 | 85 | 55 | 84 | 78 | 90 |
| | B- | 1 | 1 | 0 | 4 | 1 | 1 | 2 | 0 |
| | B+ | 4 | 1 | 1 | 1 | 0 | 0 | 1 | 0 |
| | C- | 0 | 2 | 0 | 0 | 0 | 0 | 1 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 11 | N/A | 0 | 22 | 23 | 0 | 103 | 27 | 44 | 0 |
| | A | 115 | 87 | 93 | 110 | 16 | 87 | 70 | 118 |
| | B- | 3 | 4 | 1 | 8 | 0 | 4 | 4 | 1 |
| | B+ | 1 | 5 | 2 | 1 | 0 | 1 | 0 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 |
| | C+ | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |

| Grade | DIF Category | Female/Male | Asian/White | Black/White | Hispanic/White | Native American/White | IEP/Non-IEP | LEP/Non-LEP | Title 1/Non-Title 1 |
|---|---|---|---|---|---|---|---|---|---|
| 3 | N/A | 0 | 5 | 0 | 0 | 66 | 0 | 0 | 0 |
| | A | 75 | 64 | 70 | 72 | 10 | 74 | 73 | 75 |
| | B- | 1 | 0 | 2 | 2 | 0 | 2 | 1 | 1 |
| | B+ | 0 | 7 | 3 | 2 | 0 | 0 | 2 | 0 |
| | C- | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 4 | N/A | 0 | 3 | 1 | 0 | 39 | 0 | 0 | 0 |
| | A | 77 | 68 | 75 | 77 | 37 | 77 | 77 | 78 |
| | B- | 0 | 2 | 0 | 0 | 0 | 1 | 0 | 0 |
| | B+ | 1 | 4 | 2 | 1 | 2 | 0 | 1 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |
| 5 | N/A | 0 | 2 | 6 | 0 | 41 | 0 | 1 | 0 |
| | A | 77 | 68 | 69 | 78 | 35 | 77 | 74 | 78 |
| | B- | 1 | 1 | 1 | 0 | 0 | 1 | 2 | 0 |
| | B+ | 0 | 5 | 2 | 0 | 2 | 0 | 1 | 0 |
| | C- | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 |
| 6 | N/A | 0 | 2 | 5 | 0 | 75 | 1 | 0 | 0 |
| | A | 76 | 71 | 69 | 75 | 2 | 75 | 75 | 76 |
| | B- | 1 | 0 | 2 | 1 | 0 | 1 | 2 | 1 |
| | B+ | 0 | 2 | 1 | 1 | 0 | 0 | 0 | 0 |
| | C- | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |
| 7 | N/A | 0 | 6 | 6 | 0 | 71 | 3 | 4 | 0 |
| | A | 72 | 62 | 67 | 75 | 5 | 69 | 70 | 76 |
| | B- | 1 | 2 | 1 | 0 | 0 | 0 | 2 | 0 |
| | B+ | 2 | 5 | 2 | 1 | 0 | 4 | 0 | 0 |
| | C- | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |
| 8 | N/A | 0 | 9 | 8 | 0 | 73 | 2 | 5 | 0 |
| | A | 73 | 60 | 66 | 73 | 2 | 69 | 67 | 74 |
| | B- | 2 | 3 | 0 | 1 | 0 | 2 | 3 | 0 |
| | B+ | 0 | 2 | 1 | 1 | 0 | 2 | 0 | 0 |
| | C- | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 1 |
| | C+ | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 11 | N/A | 4 | 66 | 35 | 4 | 129 | 76 | 84 | 4 |
| | A | 114 | 53 | 91 | 116 | 0 | 50 | 43 | 125 |
| | B- | 5 | 2 | 1 | 5 | 0 | 2 | 1 | 0 |
| | B+ | 5 | 7 | 1 | 4 | 0 | 1 | 1 | 0 |
| | C- | 1 | 0 | 1 | 0 | 0 | 0 | 0 | 0 |
| | C+ | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 |